System Design isn't just about drawing boxes and arrows on a whiteboard. It's about predicting how things will inevitably break and engineering around those failures.

When you scale from a single server to a distributed network of thousands of machines, failure is no longer a possibility—it is a mathematical certainty. Drawing upon the foundational concepts from textbooks like Designing Data-Intensive Applications (DDIA), System Design Interview, and Software Architecture: The Hard Parts, let's explore how systems actually fail in the real world.

When one node goes down, how does the rest of the cluster respond?

When one node goes down, how does the rest of the cluster respond?

1. The Single Point of Failure (SPOF)

The most basic, yet most devastating, failure mode is the Single Point of Failure (SPOF). This happens when an entire system's availability relies on one specific component.

Imagine a massive highway (your network traffic) that suddenly merges into a single, one-lane toll booth (your single server). It doesn't matter how fast the cars are driving; the toll booth becomes an insurmountable bottleneck.

Traffic bottlenecking at a critical, un-replicated node.

Traffic bottlenecking at a critical, un-replicated node.

The Concept: In system design, any piece of architecture without redundancy is a SPOF. This could be a primary database without a replica, a load balancer without a failover, or even a single domain name registrar. The solution? Redundancy. We scale horizontally, deploying multiple instances behind a load balancer so that if one dies, traffic effortlessly routes to the survivors.

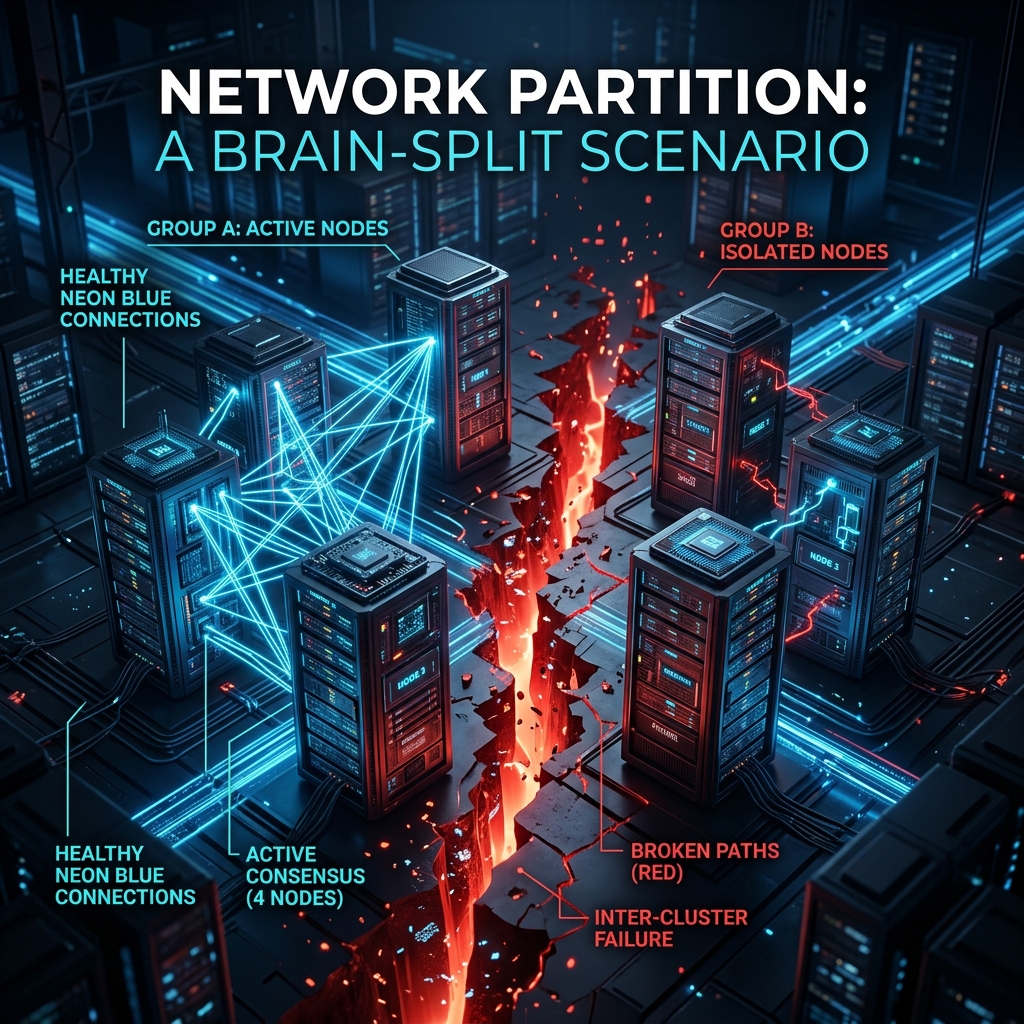

2. Network Partitions & The Split-Brain Problem

When we talk about distributed systems, we must acknowledge the "Fallacies of Distributed Computing." The biggest fallacy? That the network is reliable.

In Designing Data-Intensive Applications (DDIA), Martin Kleppmann highlights the danger of Network Partitions. A partition occurs when the network connecting two halves of your system simply drops.

A digital fault line severing communication between two database clusters.

A digital fault line severing communication between two database clusters.

The Concept: Imagine two managers running a busy restaurant, communicating via walkie-talkie. If their walkie-talkies break (network partition), they can no longer synchronize. If Manager A accepts a reservation for Table 5, and Manager B accepts a reservation for the exact same table, you have a conflict.

In a database, this is the Split-Brain Problem. The CAP Theorem dictates that during a network partition, you must choose between Consistency (refusing to accept new writes until the network heals) or Availability (accepting writes on both sides and risking conflicting data).

3. The Danger of Clock Skew

Another hard lesson from DDIA: You cannot trust time.

In a distributed system, every server has its own internal quartz clock. Due to temperature changes and minor hardware differences, these clocks drift apart. One server might think it's 12:00:01, while another thinks it's 12:00:05.

The Concept: If Server A writes a record at 12:00:02 (according to its clock), and Server B writes an update at 12:00:01 (according to its slower clock), the database might process Server B's write as the "newer" one and silently overwrite Server A's data, even though Server A's write actually happened later in the physical world! This is why distributed systems rely on logical clocks (like Vector Clocks) instead of wall-clock time to determine the strict ordering of events.

4. The Microservice Trap: The Distributed Monolith

Sam Newman (Monolith to Microservices) and Neal Ford (Software Architecture: The Hard Parts) frequently warn about the dangers of splitting architectures too aggressively.

Microservices promise agility, but if you split your domains incorrectly, you don't get independent services. Instead, you create a tangled, highly-coupled mess called a Distributed Monolith.

A tangled web of synchronous microservice dependencies.

A tangled web of synchronous microservice dependencies.

The Concept: If Service A cannot complete a user request without synchronously calling Service B, which calls Service C, which calls Service D... you haven't decoupled anything. You've simply taken in-memory function calls and replaced them with slow, unreliable network calls. If Service D fails, the entire chain collapses. This is why asynchronous event-driven architectures (like Kafka) and careful Domain-Driven Design (DDD) boundaries are critical to surviving microservices.

Conclusion: Designing for Failure

Systems don't fail because engineers are careless; they fail because complexity scales exponentially. The mark of a senior architect isn't building a system that never fails—it's building a system that fails gracefully.

By anticipating SPOFs, respecting network partitions, doubting system clocks, and managing microservice boundaries, we move from writing code to truly engineering systems.