Imagine a bustling corporate office in the 1990s. Every time the Sales department makes a deal, they have to physically walk a piece of paper over to the Accounting department. Then, they have to walk another copy to the Shipping department. When Shipping sends the package, they walk a confirmation slip back to Sales, and another to Accounting.

As the company grows, more departments are added: Analytics, Customer Support, Marketing. Suddenly, the hallways are jammed with employees running point-to-point, trying to keep everyone synchronized. Papers get lost, people wait on hold, and the entire system becomes a slow, tangled mess.

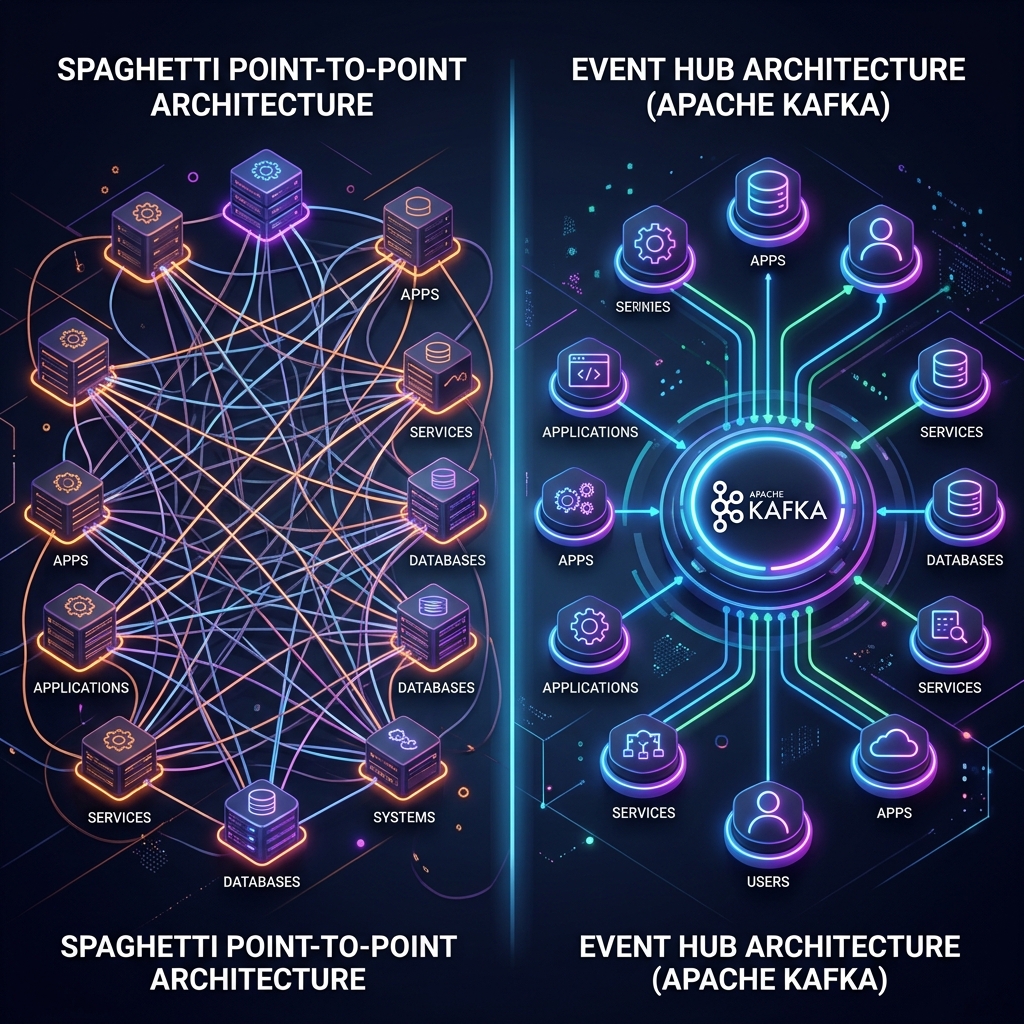

In software architecture, this is known as the point-to-point integration problem. As your system grows from two microservices to twenty, the number of possible direct connections grows roughly with the square of the number of services, creating a fragile "spaghetti" architecture.
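To put rough numbers on that growth, here is a tiny sketch. It assumes the worst case, where every service needs a direct link to every other service; real systems usually sit somewhere below that ceiling. The function name is mine, purely for illustration.

```python
def point_to_point_links(services: int) -> int:
    """Direct links needed if every service talks to every other one."""
    return services * (services - 1) // 2


for n in (2, 5, 20):
    print(n, point_to_point_links(n))
# 2 1
# 5 10
# 20 190
```

Going from two services to twenty multiplies the service count by ten, but the number of potential hallway trips by 190.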

Enter Apache Kafka.

To understand how Kafka changed data engineering, I've applied the Feynman Technique: breaking down the highly technical concepts from definitive Kafka literature into simple, intuitive analogies.

Let's dive in.

1. The End of Spaghetti Architecture

In our office analogy, the solution isn't to make the employees run faster. The solution is to build a central Post Office.

When Sales closes a deal, they don't walk to Accounting or Shipping. They simply drop a single "Deal Closed" memo into the central Post Office. Accounting and Shipping, whenever they are ready, walk to the Post Office and read the memos they care about.

Kafka replaces the chaotic N x M point-to-point connections with a clean, centralized event streaming hub.

The Concept: Apache Kafka acts as the central nervous system for your data. Instead of databases, microservices, and analytics engines talking directly to each other, they all talk to Kafka. Systems that produce data (Producers) write to Kafka, and systems that consume data (Consumers) read from Kafka. This decouples the architecture, allowing you to add new services without rewiring the old ones.
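Here is a minimal sketch of that decoupling. It is a toy in-memory model, not the Kafka client API: `ToyHub`, `publish`, and `read` are names I made up to mirror the Post Office analogy.

```python
from collections import defaultdict


class ToyHub:
    """In-memory stand-in for the central hub: topic name -> list of events."""

    def __init__(self) -> None:
        self._topics = defaultdict(list)

    def publish(self, topic: str, event: str) -> None:
        # Producers only append; they never address a specific reader.
        self._topics[topic].append(event)

    def read(self, topic: str, start: int = 0) -> list[str]:
        # Reading does not remove anything, so every reader sees every event.
        return self._topics[topic][start:]


hub = ToyHub()

# Sales (a producer) only knows about the hub, not about its readers.
hub.publish("deals", "Deal Closed: order 1")
hub.publish("deals", "Deal Closed: order 2")

# Accounting and Shipping (consumers) read independently, whenever ready.
accounting_view = hub.read("deals")
shipping_view = hub.read("deals")

# Adding Analytics later requires no change to the Sales code above.
analytics_view = hub.read("deals")
print(analytics_view)
```

The point of the sketch is the last step: a new reader shows up, and nothing on the producing side has to be rewired.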

2. The Anatomy of a Topic: The Infinite Ledger

So, how does this Post Office actually store the messages?

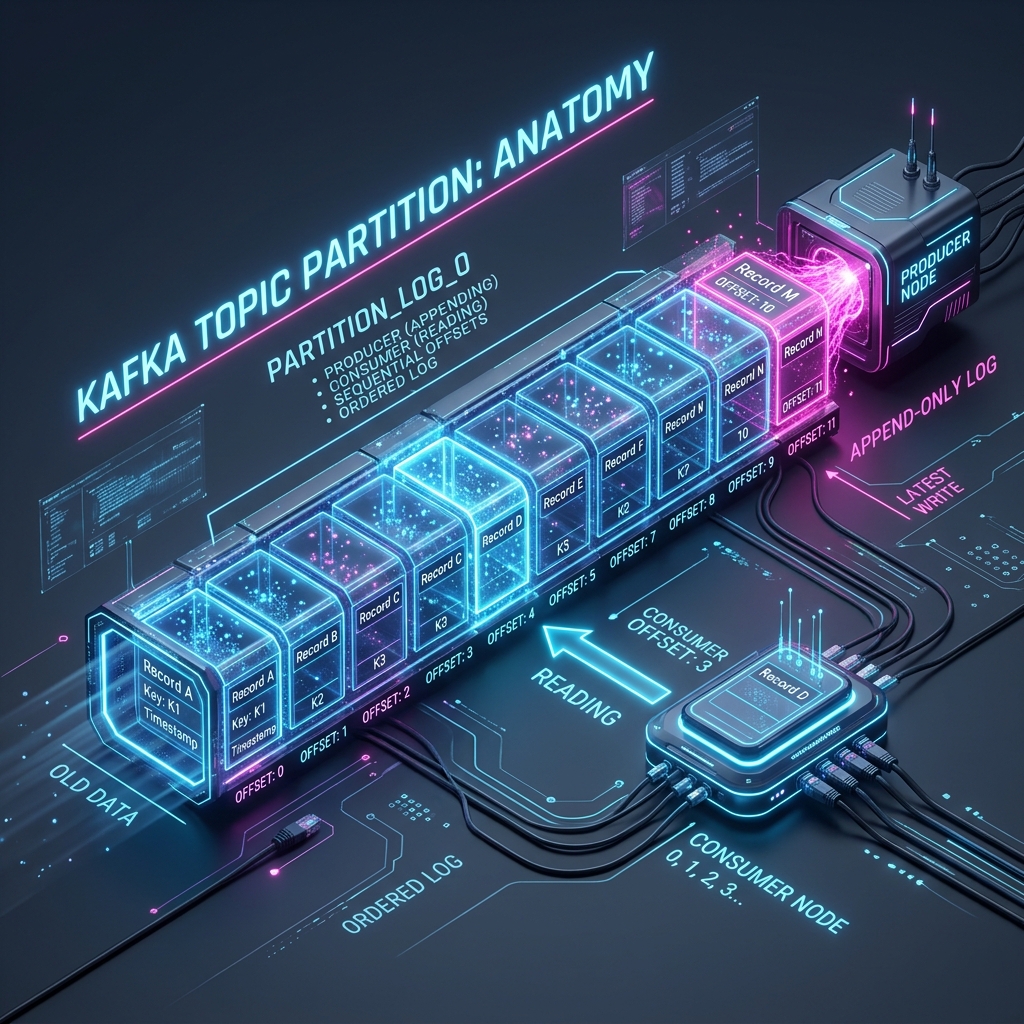

Imagine a massive, indestructible Logbook. Every time a message arrives, a scribe writes it on the very last line of the book, numbers it, and stamps the time. Once a line is written, it is never edited in place (immutability). Old pages are only removed in bulk, once the configured retention period or size limit says they have expired.

In Kafka, this logbook is called a Topic. But if a single logbook gets too big, one scribe can't write fast enough, and readers will crowd around it. So, Kafka tears the logbook into multiple smaller notebooks called Partitions.

A Partition is simply an append-only log. Data goes in at the end, and readers track their position using Offsets.

The Concept:

- Topic: A category or feed name to which records are published (e.g., user_clicks, payment_transactions).

- Partition: To scale horizontally, a Topic is divided into Partitions. Each Partition is an append-only, ordered log stored on disk.

- Offset: Every message in a partition is assigned a unique sequential ID called an Offset. It acts as a bookmark. A consumer records the Offset it has processed up to, so if it crashes and restarts, it can resume from that point instead of starting over. Depending on when the bookmark was last saved, a few records may be read again after a restart.
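The bookmark idea is easier to see in code. Below is a toy sketch of a single partition as a Python list, where a record's index plays the role of its offset. `ToyPartition` and its methods are hypothetical names, and real Kafka stores the log on disk across brokers rather than in a list.

```python
class ToyPartition:
    """Append-only list; a record's index is its offset."""

    def __init__(self) -> None:
        self._records = []

    def append(self, value: str) -> int:
        self._records.append(value)
        return len(self._records) - 1  # offset assigned to the new record

    def read_from(self, offset: int) -> list[tuple[int, str]]:
        # Return (offset, value) pairs starting at the given offset.
        return list(enumerate(self._records))[offset:]


partition = ToyPartition()
for click in ("home", "pricing", "signup"):
    partition.append(click)

committed = 0  # the consumer's bookmark: the next offset it should read

# First run: process two records, moving the bookmark each time, then "crash".
for offset, value in partition.read_from(committed)[:2]:
    committed = offset + 1

# After the restart: resume from the bookmark, not from the beginning.
remaining = partition.read_from(committed)
print(remaining)  # [(2, 'signup')]
```

Notice that the log itself never changes when a consumer reads. Only the consumer's own bookmark moves.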

3. Horizontal Scaling: Consumer Groups

Let's say the payment_transactions Topic is receiving 10,000 messages a second. A single Accounting clerk (a Consumer) reading those messages will quickly fall behind.

We need to hire a team of clerks. But if they all read the same messages, they'll process payments twice! How do we organize them?

We put them in a Consumer Group.

Horizontal scaling in action: A Consumer Group automatically divides the workload by assigning different partitions to different nodes.

The Concept: A Consumer Group is a collection of consumers working together to read a Topic. Kafka guarantees that each partition is read by only one consumer within a group.

If your Topic has 3 partitions, and you start a Consumer Group with 3 consumers, Kafka automatically assigns one partition to each consumer. They process data perfectly in parallel. If a consumer crashes, Kafka automatically reassigns its partition to a surviving consumer (a process called Rebalancing). This is the secret sauce to Kafka's massive scalability.
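To make the assignment and rebalancing steps concrete, here is a sketch that uses a simple round-robin rule. That rule is my stand-in for illustration; Kafka ships several assignment strategies and the one in use depends on configuration. The clerk names are hypothetical.

```python
def assign(partitions: list[int], consumers: list[str]) -> dict[str, list[int]]:
    """Spread partitions over consumers; each partition gets exactly one owner."""
    assignment = {consumer: [] for consumer in consumers}
    for i, partition in enumerate(partitions):
        owner = consumers[i % len(consumers)]
        assignment[owner].append(partition)
    return assignment


partitions = [0, 1, 2]
group = ["clerk-a", "clerk-b", "clerk-c"]
print(assign(partitions, group))
# {'clerk-a': [0], 'clerk-b': [1], 'clerk-c': [2]}

# clerk-b crashes: rerun the assignment over the survivors (a "rebalance").
group.remove("clerk-b")
print(assign(partitions, group))
# {'clerk-a': [0, 2], 'clerk-c': [1]}
```

One consequence of "one owner per partition": if you call `assign(partitions, ...)` with four clerks and only three partitions, the fourth clerk gets an empty list. Adding consumers beyond the partition count does not add throughput.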

4. The Distributed Post Office: Brokers and Clusters

Kafka doesn't run on a single machine—that would be a single point of failure. It runs as a cluster of multiple servers, called Brokers.

Imagine having three Post Office buildings in different cities. If one burns down, the mail shouldn't be lost. Kafka achieves this through Replication.

For every partition, Kafka elects one Broker as the Leader and others as Followers. By default, all reading and writing goes through the Leader, while the Followers continuously copy the Leader's log. If the Leader's machine fails, the cluster's coordination layer (ZooKeeper in older versions, the built-in KRaft protocol in newer ones) quickly promotes an in-sync Follower to become the new Leader.

The application keeps running, and any record that had already been copied to the in-sync Followers is not lost.
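Here is the failover story as a toy model. It makes one big simplifying assumption, flagged in the code: followers copy each write synchronously, so they never lag behind the leader. The class and broker names are invented for the example, and the promotion rule (pick the first survivor) is far cruder than a real leader election.

```python
class ToyReplicatedPartition:
    """One partition copied onto several brokers, with a single leader."""

    def __init__(self, brokers: list[str]) -> None:
        self.logs = {broker: [] for broker in brokers}
        self.leader = brokers[0]

    def write(self, record: str) -> None:
        # The write lands on the leader first, then every follower copies it.
        # Assumption: copying is synchronous, so replicas never lag here.
        self.logs[self.leader].append(record)
        for broker, log in self.logs.items():
            if broker != self.leader:
                log.append(record)

    def fail(self, broker: str) -> None:
        # Drop the dead broker; if it was the leader, promote a survivor.
        del self.logs[broker]
        if broker == self.leader:
            self.leader = next(iter(self.logs))

    def read(self) -> list[str]:
        return list(self.logs[self.leader])


partition = ToyReplicatedPartition(["broker-1", "broker-2", "broker-3"])
partition.write("payment 1")
partition.write("payment 2")

partition.fail("broker-1")   # the leader's building burns down
print(partition.leader)      # broker-2
print(partition.read())      # ['payment 1', 'payment 2']
```

In this model nothing is lost because every follower already had every record. In a real cluster, how much survives a leader failure depends on how many replicas had acknowledged each write before the crash.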

5. Beyond Storage: Kafka Streams and ksqlDB

Kafka is exceptional at moving and storing data. But what if you want to transform the data as it flows?

Imagine our Post Office has a special assembly line. Instead of just storing memos, a robotic arm grabs "Deal Closed" memos, looks up the customer's history from a database, merges the information, and drops a brand new "Enriched Deal" memo into a different pile.

The Concept: This is Stream Processing. Kafka Streams is a Java library that allows developers to build robust applications that process data in real-time as it flows through Kafka topics. If writing Java code is too heavy, ksqlDB allows you to perform these same transformations using simple, familiar SQL queries on moving data streams. You can filter, aggregate, and join real-time streams as easily as querying a traditional relational database.
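The robotic arm can be sketched with a plain Python generator. To be clear, this is not the Kafka Streams API and not ksqlDB syntax; it only shows the shape of the idea (read an event, look something up, emit a new event). The lookup table, field names, and the 100 threshold are all made up for the example.

```python
from typing import Iterable, Iterator

# Hypothetical customer lookup table standing in for the "database".
CUSTOMER_HISTORY = {
    "c-1": {"tier": "gold"},
    "c-2": {"tier": "basic"},
}


def enrich(deals: Iterable[dict]) -> Iterator[dict]:
    """Join each deal with the customer's history and emit a new event."""
    for deal in deals:
        history = CUSTOMER_HISTORY.get(deal["customer_id"], {})
        # Build a new event; the original "Deal Closed" memo is left untouched.
        yield {**deal, **history, "type": "Enriched Deal"}


deals_closed = [
    {"type": "Deal Closed", "customer_id": "c-1", "amount": 1200},
    {"type": "Deal Closed", "customer_id": "c-2", "amount": 80},
]

# Filter and join in one pass: keep only enriched deals worth 100 or more.
enriched = [event for event in enrich(deals_closed) if event["amount"] >= 100]
print(enriched)
```

Because `enrich` is a generator, it handles one memo at a time as it arrives, which is the same mental model as processing an unbounded stream rather than a finished table.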

The Verdict

Apache Kafka is more than just a message queue; it is a distributed, fault-tolerant, highly scalable event streaming platform. By treating data not as static rows in a database, but as a continuous, immutable stream of events, Kafka allows organizations to build resilient architectures that can handle massive throughput while every service works from the same shared record of events.

It is the beating heart of modern system design.

References & Further Reading

This post synthesizes concepts from the core literature on event streaming:

- Kafka: The Definitive Guide (2nd Edition) by Gwen Shapira, Todd Palino, Rajini Sivaram, and Krit Petty.

- Mastering Kafka Streams and ksqlDB by Mitch Seymour.

- Making Sense of Stream Processing by Martin Kleppmann.