Serverless is one of those buzzwords that gets thrown around constantly in modern software engineering. If you read the marketing materials, it sounds like some kind of magic that eliminates all your infrastructure problems. On the other hand, if you read internet forums, it sounds like an overcomplicated, overpriced nightmare.

But what does "Serverless" actually mean?

Let's use the Richard Feynman technique—simplifying a complex concept by breaking it down into everyday analogies—to understand what Serverless really is, where it came from, and why it matters.

1. The Evolution of Hosting: From Bedrooms to the Cloud

To understand Serverless, we first have to understand what came before it. Let's look at the evolution of how we host software.

From physical hardware to invisible utility computing.

- Physical Servers: In the beginning, you literally owned a computer. You bought it, plugged it in, and connected it to the internet. If the power went out, your site went down.

- Colocation: You realized your bedroom wasn't a great place for a server, so you started renting space in a professional data center. You still bought the server, but they provided reliable power and internet.

- Virtualization / Cloud: Instead of buying physical hardware, you rented "virtual" computers (Virtual Machines) from companies like Amazon or Google. You could get a new server in minutes instead of weeks.

- Platform as a Service (PaaS): Companies started offering platforms where you just upload your code, and they handle the virtual machines and web servers for you.

- Serverless: The latest step. The servers become entirely invisible to you. You just write small functions, and the cloud provider runs them, scales them, and manages the underlying machines automatically.

(Wait, so there are still servers in Serverless? Yes! The "-less" in Serverless doesn't mean "without servers." It means "servers are invisible to you." It's like "wireless" internet, which still relies on wires; your device just doesn't have one plugged into it.)

2. The Restaurant vs. The Food Truck

The best way to understand the core benefit of Serverless is to look at billing and utilization.

Imagine you want to start selling burgers.

You rent a traditional restaurant building. You hire a chef. You pay for electricity, water, and rent. During the lunch rush, you are slammed. But at 3:00 AM? Your restaurant is completely empty. Your chef is sitting there, bored. The lights are on. You are burning money. You pay rent whether you have 100 customers or zero.

This is exactly how traditional servers work. You rent a server, and you pay for it 24/7, even when no one is visiting your website.

With Serverless, you pay only for what you consume.

Now, imagine a magical Food Truck on Demand. When a customer walks up and asks for a burger, the truck pops into existence, cooks the burger, hands it over, and then vanishes. This is Serverless.

With Serverless, you don't pay "rent" for an idling server. You only pay for the time your code is actually running, typically metered in milliseconds. If nobody visits your website for a month, your bill for running those functions is $0.00.
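To make the restaurant-versus-food-truck arithmetic concrete, here is a rough sketch of how a pay-per-use bill might be estimated. The prices, the flat server rent, and the function name `serverless_monthly_cost` are all assumptions chosen for illustration; real provider rates and billing rules differ.

```python
# Hypothetical prices for illustration only; real provider rates differ.
PRICE_PER_GB_SECOND = 0.0000166667   # assumed price of compute time
PRICE_PER_MILLION_REQUESTS = 0.20    # assumed per-request fee
ALWAYS_ON_SERVER_PER_MONTH = 30.00   # assumed flat "rent" for a small server


def serverless_monthly_cost(requests: int, avg_duration_ms: float, memory_gb: float) -> float:
    """Estimate a usage-based bill: compute time consumed plus a per-request fee."""
    gb_seconds = requests * (avg_duration_ms / 1000) * memory_gb
    compute = gb_seconds * PRICE_PER_GB_SECOND
    request_fee = (requests / 1_000_000) * PRICE_PER_MILLION_REQUESTS
    return compute + request_fee


# Compare a silent month, a quiet month, and a busy month against flat rent.
for monthly_requests in (0, 100_000, 10_000_000):
    cost = serverless_monthly_cost(monthly_requests, avg_duration_ms=120, memory_gb=0.128)
    print(f"{monthly_requests:>10} requests: "
          f"serverless ${cost:.2f} vs. server ${ALWAYS_ON_SERVER_PER_MONTH:.2f}")
```

The point of the sketch is the shape of the curve, not the numbers: the serverless cost starts at zero and grows with traffic, while the server costs the same whether it is busy or idle.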

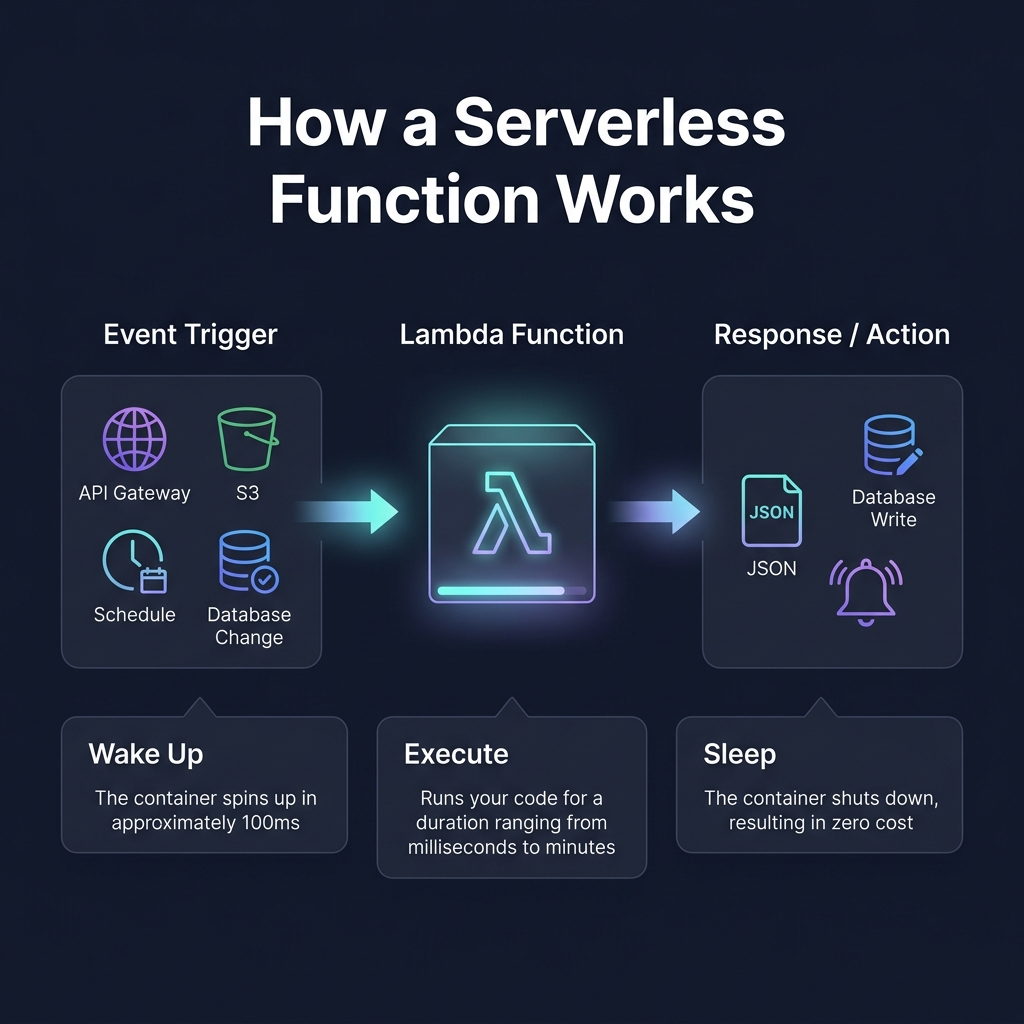

3. How a Serverless Function Actually Works

In a Serverless architecture, you don't build one giant application. Instead, you write tiny snippets of code called Functions, which run on services like AWS Lambda.

These functions are designed to do exactly one thing, and they only wake up when something explicitly triggers them.

A function wakes up, does its job, and goes back to sleep.

Here is the lifecycle of a Serverless function:

- The Trigger (Event): Something happens. A user clicks a button, a file is uploaded, or a scheduled timer goes off.

- The Wake Up: The cloud provider spins up a tiny, invisible container for your code.

- The Execution: Your code runs. It might read from a database, process some data, or return a JSON response.

- The Sleep: Once the function finishes returning the response, the container goes idle and you stop paying. If no new events arrive for a while, the provider tears it down.
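From the function's point of view, the lifecycle above is very small. Here is a minimal sketch in the style of an AWS Lambda Python handler, which receives an `event` and a `context`. The `name` field in the event and the shape of the response are assumptions made for this example, not something every trigger provides.

```python
import json


def handler(event, context):
    """Entry point the platform calls once for each triggering event."""
    # The event carries the trigger's data; "name" is a made-up field.
    name = event.get("name", "world")
    # Do one small job and return. Waking up and going back to sleep
    # happen outside this code, on the provider's side.
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


# Local stand-in for the platform invoking the function on an event.
if __name__ == "__main__":
    print(handler({"name": "Ada"}, None))
```

Notice what is missing: there is no web server to start, no port to listen on, and no loop waiting for requests. The code only describes what to do when an event arrives.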

4. The Event-Driven Workshop

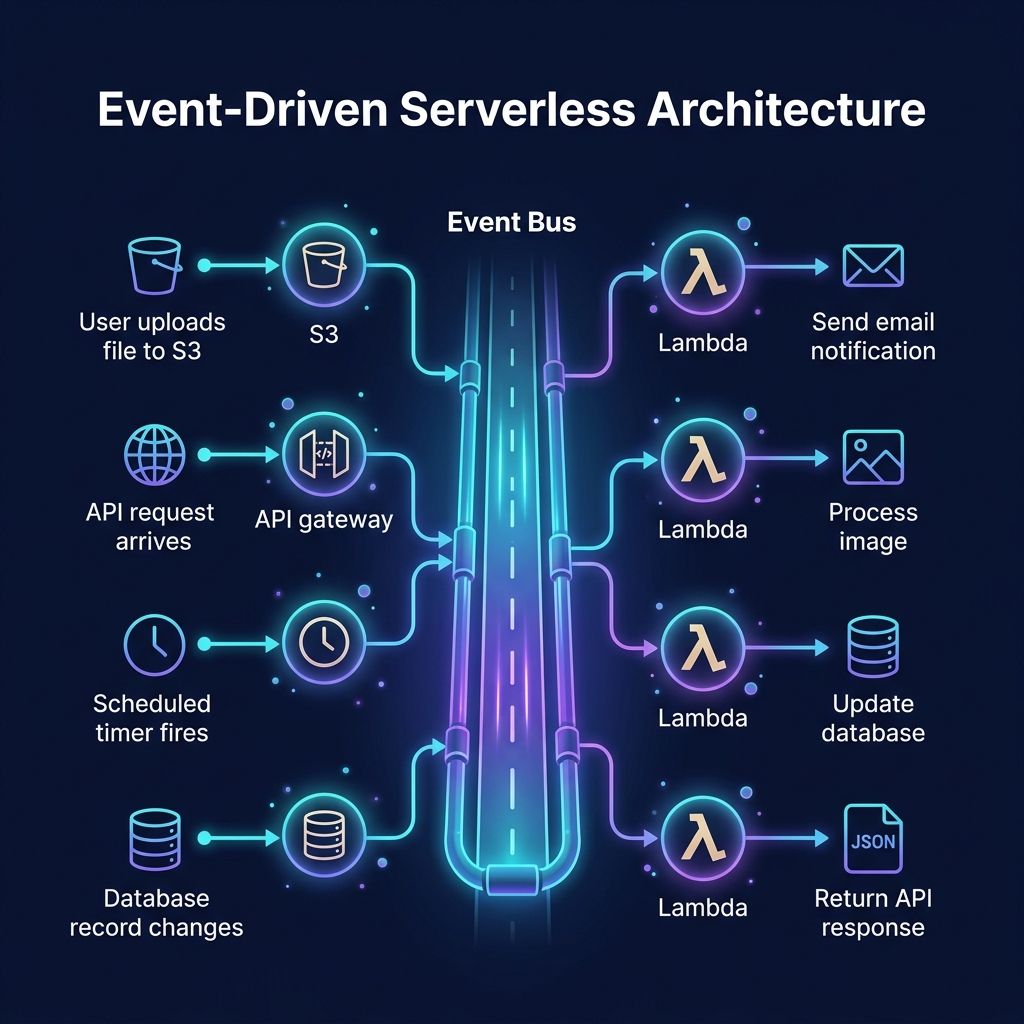

Because everything in Serverless is built out of these tiny, temporary functions, the architecture looks very different from a traditional system.

It becomes an Event-Driven Architecture. Instead of one central program giving orders, you have a giant nervous system where different services react to events as they happen.

In an event-driven system, functions react seamlessly to changes, working together like a symphony.

Think of it like a chain reaction factory:

- A user uploads an image to cloud storage.

- Event! The storage bucket yells, "I got a new file!"

- The ImageResizer function hears this, wakes up, compresses the image, and saves it.

- Event! The ImageResizer yells, "I made a smaller image!"

- The DatabaseUpdater function hears this, wakes up, and records the new image URL in the database.
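The chain reaction above can be imitated on a single machine with a toy event bus. This is only a sketch: the `on` and `publish` helpers, the event names `file.uploaded` and `image.resized`, and the in-memory `database` list are all invented for illustration. In a real system the cloud provider does the routing between services.

```python
from collections import defaultdict

# A toy in-memory event bus standing in for the provider's event routing.
subscribers = defaultdict(list)
database = []  # stand-in for a real database table


def on(event_name):
    """Register a function to run whenever event_name is published."""
    def register(func):
        subscribers[event_name].append(func)
        return func
    return register


def publish(event_name, payload):
    """Deliver the payload to every function listening for this event."""
    for func in subscribers[event_name]:
        func(payload)


@on("file.uploaded")
def image_resizer(payload):
    # Pretend to compress the image, then announce the result.
    small_key = payload["key"].replace(".png", "-small.png")
    publish("image.resized", {"key": small_key})


@on("image.resized")
def database_updater(payload):
    # Record the new image's location.
    database.append(payload["key"])


# The user upload kicks off the whole chain.
publish("file.uploaded", {"key": "cat.png"})
print(database)
```

Neither function calls the other directly. Each one only knows which event it listens for and which event it emits, which is why a new listener can be added without touching the existing code.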

It is a loosely coupled, highly scalable way to build software. If 10,000 users upload an image at the exact same second, the cloud provider can wake up thousands of copies of your ImageResizer function in parallel, up to your account's concurrency limits. There is no single server for you to overload.

5. The Trade-offs: The Cold Start Problem

If Serverless is so amazing, why doesn't everyone use it for everything?

Remember earlier when we mentioned the "magical food truck" that pops into existence? Well, popping into existence takes a tiny bit of time.

If a function hasn't been used recently, the cloud provider will tear down its container to save resources. When the next request comes in, the system has to find an empty server, download your code, configure the environment, and load your program into memory before it can actually run.

This is called a Cold Start.

Cold starts add latency to your first request.

If your car is already running (a Warm Start), hitting the gas makes you move instantly. If your car is cold in the garage, it takes time to open the door, turn the key, let the engine catch, and finally shift into drive.

For many applications, a cold start delay of a few hundred milliseconds is completely unnoticeable. But if you are building an ultra-low-latency high-frequency trading platform, or a fast-action multiplayer game, that slight hesitation can be a dealbreaker.

The Verdict

Serverless represents a major shift in how we think about computing. By giving up control over the underlying infrastructure, engineers gain the ability to scale on demand without actively managing servers, and businesses gain a pricing model that closely tracks their actual usage.

Just like moving from physical servers to the Cloud, Serverless isn't just a different way to host code—it's a fundamentally different way to design and build systems.